Embeddings in NLP: How Machines Turn Words into Meaning

The Core Problem: Computers Don’t Speak Human

Before we talk about embeddings in NLP, we need to understand the problem they solve. Computers are, at heart, number-crunching machines. They process integers, floats, matrices. Words are not numbers. So the very first challenge in natural language processing is deceptively simple: how do you represent a word as a number?

The obvious first answer is: just assign each word a number. Cat = 1. Dog = 2. King = 3. Queen = 4. Done, right?

Not even close. The problem with raw integer encoding is that it implies mathematical relationships that don’t exist. Is ‘dog’ twice ‘cat’? Is ‘king’ the sum of ‘cat’ and ‘dog’? Of course not. Arbitrary integers carry arbitrary relationships, and machine learning models will try to exploit every pattern they can find, including meaningless ones.

The First Real Attempt: One-Hot Encoding

The classical solution to arbitrary integer encoding was one-hot vectors. The idea is elegant in its simplicity. Imagine your entire vocabulary has 10,000 words. Each word gets represented as a vector of length 10,000, with a single 1 in its position and 0s everywhere else.

Example: If ‘cat’ is word number 42 in your vocabulary, its one-hot vector is a 10,000-dimensional vector with a 1 at position 42 and 0s everywhere else.

This solves the false-relationship problem. No word is numerically ‘larger’ than another, and no pair is arbitrarily ‘closer’: every pair of distinct words is exactly the same distance apart (√2 in Euclidean terms), and their dot product is zero.
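To make this concrete, here is a minimal plain-Python sketch over a hypothetical five-word vocabulary. It builds one-hot vectors and checks that every pair of distinct words sits at the same Euclidean distance (√2) with a dot product of zero, which is exactly why the encoding carries no notion of similarity.

```python
import math

# Hypothetical five-word vocabulary; real vocabularies are far larger.
vocab = ["cat", "kitten", "dog", "king", "democracy"]
index = {word: i for i, word in enumerate(vocab)}


def one_hot(word):
    """Vector of length len(vocab) with a single 1 at the word's index."""
    vec = [0] * len(vocab)
    vec[index[word]] = 1
    return vec


def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


print(one_hot("cat"))                                   # [1, 0, 0, 0, 0]
print(euclidean(one_hot("cat"), one_hot("kitten")))     # 1.4142135623730951
print(euclidean(one_hot("cat"), one_hot("democracy")))  # 1.4142135623730951
print(dot(one_hot("cat"), one_hot("kitten")))           # 0
```

‘Kitten’ and ‘democracy’ are equally far from ‘cat’, so nothing about meaning is encoded.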

But this creates a new, deeper problem.

Why One-Hot Encoding Fails

- No semantic meaning: The words ‘cat’ and ‘kitten’ are just as far apart as ‘cat’ and ‘democracy.’ The vectors capture nothing about word relationships.

- Catastrophic dimensionality: Real-world vocabularies contain hundreds of thousands of words. A 300,000-word vocabulary means every word is a 300,000-dimensional vector that is almost entirely zeros. This is computationally brutal.

- No generalisation: A model trained on ‘I love my cat’ learns nothing useful for ‘I love my kitten,’ because the two vectors share no information.

The field needed something fundamentally different. It needed a way to represent words such that similar words live close together in mathematical space. That idea is the heart of embeddings in NLP.

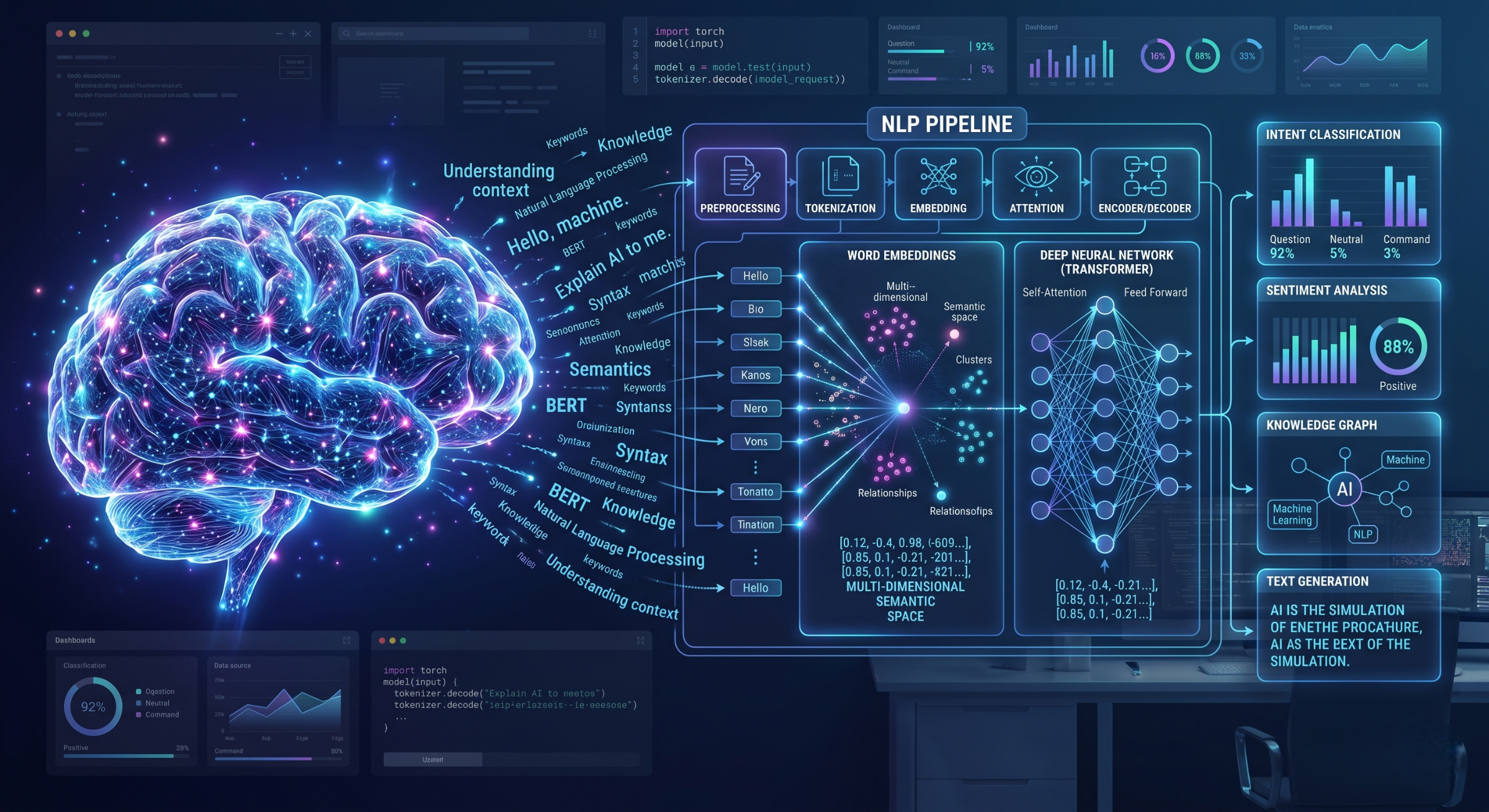

What Are Embeddings in NLP? The Core Intuition

An embedding is a dense, low-dimensional vector representation of a word (or sentence, or document) that captures semantic meaning through its position in a continuous vector space.

Let’s unpack that slowly.

Dense: Instead of a 300,000-dimensional vector of mostly zeros, an embedding might be a 300-dimensional vector where every single value carries information.

Low-dimensional: 300 dimensions instead of 300,000. That’s a 1,000x compression, and the result still captures far more meaningful structure than a one-hot vector does.

Semantic meaning through position: Here’s the magic. In embedding space, words with similar meanings end up near each other. ‘Cat’ and ‘kitten’ are neighbours. ‘King’ and ‘queen’ are neighbours. With the contextual embeddings covered later, ‘Python’ (the snake) and ‘Python’ (the language) can even land in different neighbourhoods depending on the sentence around them.
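Closeness in embedding space is most often measured with cosine similarity, the cosine of the angle between two vectors. The vectors in this sketch are hand-picked, hypothetical 4-dimensional values rather than output from any trained model; they only illustrate how the measure behaves.

```python
import math

# Hand-picked 4-dimensional vectors (hypothetical values for illustration).
# Real embeddings are learned from data and usually have hundreds of dimensions.
embeddings = {
    "cat":       [0.90, 0.80, 0.10, 0.00],
    "kitten":    [0.85, 0.75, 0.20, 0.05],
    "democracy": [0.00, 0.10, 0.90, 0.80],
}


def cosine(u, v):
    """Cosine of the angle between u and v: 1.0 = same direction, 0.0 = orthogonal."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


print(round(cosine(embeddings["cat"], embeddings["kitten"]), 3))     # close to 1
print(round(cosine(embeddings["cat"], embeddings["democracy"]), 3))  # much lower
```

Cosine similarity compares direction and ignores vector length, which is one reason it is a common default for comparing embeddings.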

The Idea That Started It All: The Distributional Hypothesis

Every embedding method, from the earliest to the most modern, rests on one foundational linguistic insight, articulated by J.R. Firth in 1957:

“You shall know a word by the company it keeps.”

This is the distributional hypothesis: words that appear in similar contexts tend to have similar meanings. ‘Dog’ and ‘cat’ both appear near words like ‘pet,’ ‘feed,’ ‘fur,’ ‘owner.’ So statistically, they should have similar representations.

Every embedding technique is essentially an algorithm for operationalising this hypothesis at scale. The difference between methods is how they measure and encode ‘company.’
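One simple way to operationalise ‘company’ is to count which words appear within a few positions of each word. This sketch does that over a tiny, made-up corpus and compares the resulting context sets with Jaccard overlap; the corpus, the window size of 2 and the overlap measure are illustrative choices, not any particular published method.

```python
from collections import Counter, defaultdict

# A tiny made-up corpus (hypothetical); real models train on billions of tokens.
corpus = [
    "i feed my dog every morning",
    "i feed my cat every morning",
    "the dog has soft fur",
    "the cat has soft fur",
    "the senate passed the bill",
]
WINDOW = 2  # words on each side that count as a word's "company"

# For each word, count the words that occur within WINDOW positions of it.
contexts = defaultdict(Counter)
for sentence in corpus:
    tokens = sentence.split()
    for i, word in enumerate(tokens):
        lo, hi = max(0, i - WINDOW), min(len(tokens), i + WINDOW + 1)
        for j in range(lo, hi):
            if j != i:
                contexts[word][tokens[j]] += 1


def shared_company(w1, w2):
    """Jaccard overlap of the distinct context words seen around w1 and w2."""
    a, b = set(contexts[w1]), set(contexts[w2])
    return len(a & b) / len(a | b)


print(shared_company("dog", "cat"))     # 1.0
print(shared_company("dog", "senate"))  # 0.125
```

‘Dog’ and ‘cat’ keep identical company in this toy corpus, while ‘senate’ shares only ‘the’ with ‘dog’. Embedding methods turn this kind of statistic into dense vectors.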

Word2Vec: The Breakthrough That Changed NLP

In 2013, a team at Google led by Tomas Mikolov published a paper that sent shockwaves through the research community. Word2Vec didn’t just propose better word representations. It demonstrated something almost surreal: word embeddings could capture analogy relationships through simple vector arithmetic.

king − man + woman ≈ queen

That single equation made headlines in machine learning circles. It meant the embedding space had learned something structurally real about gender relationships in language without anyone explicitly programming it.
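The analogy itself is just vector addition followed by a nearest-neighbour search. In this sketch the 3-dimensional vectors are hypothetical and hand-built so that one dimension loosely tracks gender; real Word2Vec vectors are learned, have hundreds of dimensions, and satisfy the relationship only approximately. As is common practice, the three query words are excluded from the candidate answers.

```python
import math

# Hypothetical 3-d vectors built by hand: dimension 0 ~ "royalty",
# dimension 1 ~ "male (+) vs female (-)", dimension 2 ~ everything else.
vectors = {
    "king":   [0.9, 0.8, 0.1],
    "queen":  [0.9, -0.8, 0.1],
    "man":    [0.1, 0.8, 0.2],
    "woman":  [0.1, -0.8, 0.2],
    "prince": [0.7, 0.8, 0.3],
    "apple":  [0.0, 0.0, 0.9],
}


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def analogy(a, b, c):
    """Answer 'a is to b as c is to ?' with the word nearest to b - a + c."""
    target = [vb - va + vc for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in {a, b, c}]  # skip the query words
    return max(candidates, key=lambda w: cosine(vectors[w], target))


print(analogy("man", "king", "woman"))  # queen (king - man + woman)
```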

How Word2Vec Actually Works

Word2Vec comes in two architectures, both trained as shallow neural networks on massive text corpora:

- CBOW (Continuous Bag of Words): Given the surrounding context words, predict the centre word. Fast to train, works well with frequent words.

- Skip-gram: Given a centre word, predict the surrounding context words. Slower but better at capturing rare words and subtle relationships.

The key insight is that Word2Vec never directly optimises for ‘good embeddings.’ It optimises for a prediction task, and the embeddings emerge as a useful by-product of solving that task well. The weights connecting the input layer to the hidden layer become the word vectors.
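The two architectures differ in how a sentence is turned into prediction examples. This sketch generates those examples for a single sentence; it deliberately leaves out the neural network itself and the training tricks real Word2Vec relies on, such as negative sampling and subsampling of frequent words.

```python
def skipgram_pairs(tokens, window=2):
    """Skip-gram: one (centre, context) pair per neighbour; the model sees
    the centre word and must predict the context word."""
    pairs = []
    for i, centre in enumerate(tokens):
        for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
            if j != i:
                pairs.append((centre, tokens[j]))
    return pairs


def cbow_examples(tokens, window=2):
    """CBOW: the surrounding words together form the input; the centre
    word is the prediction target."""
    examples = []
    for i, centre in enumerate(tokens):
        context = [tokens[j]
                   for j in range(max(0, i - window), min(len(tokens), i + window + 1))
                   if j != i]
        examples.append((context, centre))
    return examples


sentence = "the cat sat on the mat".split()
print(skipgram_pairs(sentence, window=1)[:4])
# [('the', 'cat'), ('cat', 'the'), ('cat', 'sat'), ('sat', 'cat')]
print(cbow_examples(sentence, window=1)[1])
# (['the', 'sat'], 'cat')
```

Skip-gram produces one example per (centre, neighbour) pair, which is part of why it is slower to train than CBOW but gives rare words more updates.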

You can explore pre-trained Word2Vec models via Gensim, which remains one of the most practical libraries for working with static embeddings.

GloVe: Global Vectors for Word Representation

A year after Word2Vec, Stanford researchers introduced GloVe (Global Vectors for Word Representation). Their core critique of Word2Vec was that it learned from local context windows (a few words either side of the target) and therefore missed global statistical patterns across the entire corpus.

GloVe took a different approach: it first built a global co-occurrence matrix capturing how often every word pair appears together across the entire dataset, then factorised that matrix to produce embeddings. The result was that GloVe vectors explicitly encoded corpus-wide statistical relationships, not just local window patterns.
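The two ingredients described above can be sketched in a few lines: building the global co-occurrence counts, and scoring word vectors against them. Following the GloVe paper, a pair of words d positions apart contributes 1/d to the count, and the objective is a weighted least-squares fit of dot products plus biases to log co-occurrence counts, with the weighting function capped at x_max = 100 and an exponent of 3/4. The training loop itself (the paper uses AdaGrad) is omitted, and the toy corpus is hypothetical.

```python
import math
from collections import defaultdict


def cooccurrence(sentences, window=2):
    """Global co-occurrence counts summed over the whole corpus.
    A pair d positions apart contributes 1/d, as in the GloVe paper."""
    X = defaultdict(float)
    for sentence in sentences:
        tokens = sentence.split()
        for i, word in enumerate(tokens):
            for d in range(1, window + 1):
                if i + d < len(tokens):
                    other = tokens[i + d]
                    X[(word, other)] += 1.0 / d
                    X[(other, word)] += 1.0 / d  # symmetric context window
    return X


def weight(x, x_max=100.0, alpha=0.75):
    """GloVe weighting f(x): down-weights rare pairs, caps frequent ones at 1."""
    return (x / x_max) ** alpha if x < x_max else 1.0


def glove_loss(X, w, w_ctx, b, b_ctx):
    """Weighted least squares over nonzero counts:
    sum of f(X_ij) * (w_i . w~_j + b_i + b~_j - log X_ij) ** 2"""
    total = 0.0
    for (i, j), x in X.items():
        dot = sum(p * q for p, q in zip(w[i], w_ctx[j]))
        total += weight(x) * (dot + b[i] + b_ctx[j] - math.log(x)) ** 2
    return total


corpus = ["the cat sat on the mat", "the dog sat on the rug"]  # hypothetical
X = cooccurrence(corpus)
print(X[("cat", "sat")])  # 1.0
print(X[("the", "sat")])  # 2.0
```

Training would adjust the word vectors, context vectors and biases to drive `glove_loss` down; the counts in `X` are computed once, up front, for the whole corpus.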

Word2Vec vs GloVe: Key Differences

| Feature | Word2Vec | GloVe |
|---|---|---|
| Training signal | Local context window | Global co-occurrence matrix |
| Architecture | Shallow neural network | Matrix factorisation |
| Speed | Faster on large corpora | Faster once matrix is built |
| Analogy tasks | Excellent | Excellent |
| Rare words | Skip-gram handles well | Struggles slightly |
| Interpretability | Low | Moderate (count-based) |

The Big Limitation: Static Embeddings Don’t Understand Context

Both Word2Vec and GloVe produce what are called static embeddings: each word gets exactly one vector, regardless of context. This works brilliantly for many tasks, but it has a fundamental flaw that researchers couldn’t ignore for long.

Consider the word ‘bank.’

- “She sat on the river bank.”

- “He deposited the cheque at the bank.”

In a Word2Vec model, ‘bank’ has a single vector: effectively a muddled blend of its financial and geographical senses. The model cannot distinguish them. For simple tasks, this is tolerable. For reading comprehension, translation, or question answering, it is a serious limitation.

The field needed embeddings that were sensitive to context. Enter contextual models, first built on LSTMs and soon afterwards on transformers.

Contextual Embeddings in NLP: ELMo, BERT, and Beyond

The shift to contextual embeddings is one of the most significant turning points in NLP research history. Rather than assigning one fixed vector per word, contextual embedding models generate a different vector for each occurrence of a word based on its full sentence context.

ELMo (2018): The First Major Leap

ELMo (Embeddings from Language Models), from AllenNLP, was the first widely adopted contextual embedding model. It trained a forward and a backward LSTM language model and combined their internal states, so each word’s representation reflects both its left and its right context. ELMo embeddings plugged into existing NLP pipelines and improved the state of the art across all six tasks evaluated in the original paper.

BERT (2018): The Game Changer

Google’s BERT (Bidirectional Encoder Representations from Transformers) took contextual embeddings to an entirely new level. Trained on masked language modelling and next sentence prediction, BERT produced representations so rich that fine-tuning it on a downstream task with minimal additional architecture set new state-of-the-art results across eleven NLP benchmarks simultaneously.

BERT embeddings are not a lookup table like Word2Vec. They are the output of running a full transformer encoder over your input, so the embedding for ‘bank’ in a financial context is a different vector from ‘bank’ in a geographical one. Explore BERT via HuggingFace Transformers, the most comprehensive library for working with contextual embeddings today.
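What makes a transformer’s output contextual is self-attention: each token’s output vector is a weighted mix of every token in the input. The toy sketch below is not BERT. It runs a single head of scaled dot-product attention with identity query, key and value projections, no positional information and hand-picked 2-dimensional input vectors (all hypothetical), just enough to show the same ‘bank’ input coming out differently in two sentences.

```python
import math

# Hypothetical 2-d "static" input vectors: dimension 0 ~ finance, dimension 1 ~ nature.
static = {
    "bank":      [0.5, 0.5],  # ambiguous on its own
    "deposited": [0.9, 0.1],
    "cheque":    [1.0, 0.0],
    "sat":       [0.3, 0.3],
    "river":     [0.0, 1.0],
}


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """One head of scaled dot-product self-attention with identity Q/K/V
    projections: each output is a weighted average of all input vectors."""
    xs = [static[t] for t in tokens]
    dim = len(xs[0])
    outputs = []
    for q in xs:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in xs]
        weights = softmax(scores)
        outputs.append([sum(w * x[d] for w, x in zip(weights, xs)) for d in range(dim)])
    return outputs


finance = self_attention(["deposited", "cheque", "bank"])[2]
nature = self_attention(["sat", "river", "bank"])[2]
print(finance)  # leans toward dimension 0 (finance)
print(nature)   # leans toward dimension 1 (nature)
```

BERT stacks many such layers, each with multiple heads and learned projections. To get real BERT vectors, load a pretrained checkpoint through the library’s `AutoTokenizer` and `AutoModel` classes and read the model output’s `last_hidden_state`.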

Types of Embeddings in NLP: A Quick Reference

| Method | Type | Year | Best Used For |
|---|---|---|---|
| One-Hot | Static | Pre-2013 | Baseline / tiny vocabularies |
| Word2Vec | Static | 2013 | Semantic similarity, analogy tasks |
| GloVe | Static | 2014 | Global corpus statistics, fast inference |
| FastText | Static | 2016 | Morphologically rich languages, rare words |
| ELMo | Contextual | 2018 | Context-sensitive tasks, plug-in improvement |
| BERT | Contextual | 2018 | Classification, NER, QA, fine-tuning |
| GPT-family | Contextual | 2018+ | Generation, few-shot learning, agents |
| Sentence-BERT | Contextual | 2019 | Semantic search, sentence similarity |

Where Embeddings in NLP Are Actually Used

Understanding embeddings in NLP is not just a theoretical exercise. They are the invisible infrastructure behind most language technology you use every day.

- Search engines: Google’s semantic search uses embeddings to return results that are conceptually relevant, not just keyword-matched.

- Recommendation systems: Spotify, Netflix, and Amazon embed items and users into shared vector spaces to find neighbours.

- Machine translation: Encoder-decoder models use embeddings to translate between languages by mapping them through a shared semantic space.

- Sentiment analysis: Classifiers trained on top of embeddings can detect tone, emotion, and opinion in text at scale.

- Named entity recognition (NER): Contextual embeddings dramatically improve the ability to identify people, places, and organisations in text.

- RAG systems: Retrieval-Augmented Generation pipelines use embeddings to find the most semantically relevant documents to feed into an LLM.
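Semantic search and the retrieval step of a RAG pipeline share the same core: embed the query, then rank stored document embeddings by similarity. In this sketch the document and query vectors are hypothetical stand-ins for what an embedding model (for example a Sentence-BERT model) would produce, and a linear scan replaces the vector index a production system would typically use.

```python
import math

# Hypothetical pre-computed document embeddings (stand-ins for model output).
documents = {
    "Refund policy for damaged items":         [0.9, 0.1, 0.0],
    "How to reset your password":              [0.0, 0.9, 0.2],
    "Shipping times for international orders": [0.3, 0.0, 0.9],
}


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def retrieve(query_vec, docs, k=2):
    """Return the k document titles whose embeddings are closest to the query."""
    ranked = sorted(docs, key=lambda title: cosine(query_vec, docs[title]), reverse=True)
    return ranked[:k]


# Hypothetical embedding of the query "I forgot my login details".
query = [0.1, 0.8, 0.1]
print(retrieve(query, documents, k=1))  # ['How to reset your password']
```

The query shares no keywords with the top result; the match comes entirely from vector similarity. In a RAG pipeline, the text of the top-ranked documents would then be inserted into the LLM’s prompt.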

How to Start Working with Embeddings in NLP: Practical Starting Points

Theory is one thing. Here’s where to get your hands dirty:

- Gensim (Word2Vec, GloVe, FastText): The best library for static embeddings. Load pre-trained vectors or train on your own corpus in a few lines.

- HuggingFace Transformers: The go-to for contextual embeddings. Access BERT, RoBERTa, Sentence-BERT, and hundreds more with a unified API.

- Sentence-Transformers library: Purpose-built for producing high-quality sentence and paragraph embeddings. Ideal for semantic search projects.

- TensorFlow Embedding Projector: A beautiful free tool to visualise your embedding space in 3D. Indispensable for building intuition.

- Kaggle NLP competitions: Nothing accelerates learning like applying embeddings to a real task with a leaderboard.

Limitations and Open Research Problems

No survey of embeddings in NLP is complete without acknowledging what they still get wrong. For researchers, this is where the interesting work lives.

- Bias amplification: Embeddings trained on large internet corpora absorb and amplify societal biases. Word2Vec famously associated ‘man’ with ‘doctor’ and ‘woman’ with ‘nurse.’ Debiasing embeddings remains an active research area.

- Anisotropy: Research has shown that embedding spaces tend to be highly anisotropic, meaning vectors cluster in a narrow cone rather than spanning the space uniformly. This limits their expressiveness and is still poorly understood.

- Interpretability: Individual dimensions of an embedding vector rarely correspond to human-interpretable features. What does dimension 147 represent? We often don’t know.

- Cross-lingual transfer: How to build embeddings that work across multiple languages with minimal parallel data is still an unsolved problem at scale.

- Domain shift: Embeddings trained on Wikipedia often perform noticeably worse on legal contracts or medical literature. Domain adaptation of embeddings is an important applied research challenge.
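Of these problems, anisotropy is the easiest to measure directly. A common diagnostic is the average cosine similarity between pairs of embeddings, which sits near zero for directionally uniform vectors and near one when they crowd into a cone. This sketch uses synthetic vectors (Gaussian noise around a large shared offset, an assumption standing in for a trained model) and shows that subtracting the mean vector, a simple post-processing fix, removes most of the effect.

```python
import math
import random


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def mean_pairwise_cosine(vectors):
    """Average cosine similarity over all distinct pairs of vectors."""
    n = len(vectors)
    sims = [cosine(vectors[i], vectors[j]) for i in range(n) for j in range(i + 1, n)]
    return sum(sims) / len(sims)


def mean_centre(vectors):
    """Subtract the mean vector from every vector."""
    dim = len(vectors[0])
    mean = [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]
    return [[v[d] - mean[d] for d in range(dim)] for v in vectors]


random.seed(0)
# Synthetic stand-in for a trained model: Gaussian noise around a large shared
# offset, so every vector points roughly the same way (a narrow cone).
offset = [3.0] * 8
vectors = [[o + random.gauss(0, 1) for o in offset] for _ in range(50)]

before = mean_pairwise_cosine(vectors)
after = mean_pairwise_cosine(mean_centre(vectors))
print(round(before, 2))  # high: vectors crowd into a cone
print(round(after, 2))   # close to zero once the shared offset is removed
```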

From Numbers to Meaning: What Embeddings in NLP Really Represent

There’s something quietly remarkable about what embeddings in NLP have achieved. We started with an almost laughably hard problem: how do you give a number-crunching machine a sense of language? The answer, encoding words as points in a carefully structured geometric space so that meaning is captured by distance and direction, turned out to be not just workable but transformative.

Every time you use a search engine that understands what you meant rather than just what you typed, every time a translation app captures your sentence’s nuance rather than translating word for word, every time an autocomplete feels unnervingly apt, embeddings are doing quiet, invisible work.

For students and researchers entering this field, embeddings are not just a technique to memorise. They are a way of thinking about language computationally, and mastering that thinking is what separates practitioners who use NLP tools from those who push the field forward.

The geometry of language is real. Embeddings are how we mapped it.

Frequently Asked Questions About Embeddings in NLP

What are embeddings in NLP?

Embeddings in NLP are dense, low-dimensional vector representations of words, sentences, or documents that capture semantic meaning through their position in a continuous mathematical space. Words with similar meanings end up geometrically close to each other in this space.

What is the difference between Word2Vec and BERT embeddings?

Word2Vec produces a single static vector per word regardless of context. BERT produces a different vector for every occurrence of a word, depending on the full sentence around it. This means BERT can distinguish between ‘bank’ (financial) and ‘bank’ (river), while Word2Vec cannot.

Why are embeddings important in NLP?

Embeddings are important in NLP because they allow machine learning models to work with language numerically while preserving semantic relationships. With one-hot inputs alone, a model has no built-in way to know that ‘cat’ and ‘kitten’ are related, or that ‘happy’ and ‘joyful’ are synonyms.

What is the best embedding model for NLP in 2026?

For sentence-level tasks like semantic search and clustering, Sentence-BERT models (via the sentence-transformers library) are a widely used, practical default. For fine-tuning on classification or NER, BERT and its variants (RoBERTa, DeBERTa) remain highly competitive. For generative tasks, embeddings from GPT-family models are widely used.

How do I visualise word embeddings?

The best tool for visualising embeddings in NLP is the TensorFlow Embedding Projector (projector.tensorflow.org), which lets you explore high-dimensional embedding spaces using PCA or t-SNE dimensionality reduction in a browser-based 3D interface. Alternatively, use scikit-learn’s TSNE class with Matplotlib for custom visualisations.

What to Explore Next

If this post has given you a clearer picture of how embeddings in NLP work, the logical next step is to get hands-on. Load a pre-trained Word2Vec model in Gensim, run some analogy queries, and watch the geometry come alive. Then move to Sentence-BERT and build a simple semantic search engine over a dataset you care about.

The concepts will solidify the moment you write the code.

Found this useful? Share it with a fellow student or researcher who’s wrestling with how language models actually work. And if you want to go deeper, check out the Stanford CS224N NLP course, the best free deep-dive into NLP available anywhere.

Continue Your NLP Journey

If you found this guide helpful, you might also be interested in exploring how these concepts are applied in real-world scenarios.

In my previous blog, I walk through a complete NLP project, where I demonstrate how to transform raw text data into meaningful insights using practical techniques and tools. It covers everything from data preprocessing to model building and evaluation, giving you a hands-on perspective on Natural Language Processing in action.

👉 Read the full project here: Click me!

This will help you connect theory with implementation and deepen your understanding of NLP workflows.