Asymptotic Notations

Understanding Algorithm Efficiency: Asymptotic Notations

Asymptotic notation is a fundamental mathematical tool used in computer science to describe how fast an algorithm runs as the input size (n) becomes very large.

Simple Analogy: Imagine two people traveling from City A to City B. Person 1 walks, which takes many hours, while Person 2 drives a car, taking much less time. Similarly, asymptotic notation tells us which algorithm is “faster” for large inputs, regardless of the computer’s underlying hardware.

Why Do We Need It?

Using asymptotic notations allows us to compare algorithms without depending on machine speed, programming language, or hardware specifications. Specifically, we use it to:

- Measure time complexity to see how execution time grows with the input size.

- Measure space complexity to see how memory usage grows with the input size.

- Compare algorithms efficiently to determine which is better for large datasets.

- Ignore small details and focus purely on the growth rate, rather than constants.

The Key Idea: We focus heavily on large values of n (what happens when data grows), evaluate worst, best, or average performance, and completely ignore constants and small terms.

Example: If an algorithm’s time equals 3n^2 + 5n + 10, we only care about n^2 because it grows the fastest. Therefore, we write its complexity as O(n²).

Types of Asymptotic Notation

There are three main types of notations used to bound algorithm running times:

|

Notation |

Name |

Represents |

Use |

|

|

Big O (O) |

Big O |

Worst Case |

Upper Bound |

|

|

Omega (Omega) |

Big Omega |

Best Case |

Lower Bound |

|

|

Theta (Theta) |

Big Theta |

Average Case |

Tight Bound |

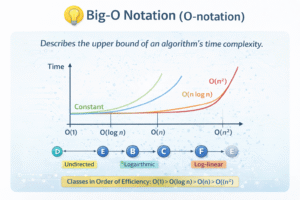

1️⃣ Big O Notation (O)

- Meaning: Represents the worst-case time complexity, acting as an upper bound. It signifies the “maximum time an algorithm can take”

- Definition: An algorithm is O(f(n)) if its running time does not exceed $c \times f(n) for large values of n, where c is a positive constant.

- Mathematical Form: T(n) lec times f(n) for nge n_0.

- Example Calculation: If T(n) = 3n^2 + 5n + 10, you drop the constants (3n^2 rightarrow n^2) and lower order terms (5n and 10) to get a final result of O(n²).

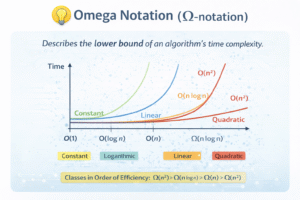

2️⃣ Omega Notation (Omega)

- Meaning: Represents the best-case time complexity, acting as a lower bound. It signifies the “minimum time required”

- Definition: An algorithm is Omega(f(n)) if it takes at least c times f(n) time for large n.

- Mathematical Form: T(n) ge c times f(n) for nge n0.

- Example: When searching in a sorted array, the best case is finding the element at the first position, which takes Omega(1) time because only 1 comparison is needed.

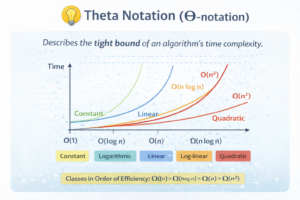

3️⃣ Theta Notation (Theta)

- Meaning: Represents the average-case time complexity and provides a tight bound. It acts as the “exact growth rate”.

- Definition: An algorithm is Theta(f(n)) if it is both O(f(n)) and Omega(f(n)).

- Mathematical Form: c1 times f(n) le T(n) lec2 times f(n) for nge n0.

- Example: If T(n) = 2n^2 + 3n + 1, the average growth is Theta(n^2). Another example is Merge Sort, where the best, average, and worst cases are all Theta(n \log n) because the algorithm always takes the same pattern of time.

Common Time Complexities

Here are the most common time complexities ranked from fastest to slowest:

|

Notation |

Name |

Example |

Performance |

n=100 |

|

|

O(1) |

Constant |

Array access |

Excellent |

1 |

|

|

O(log n) |

Logarithmic |

Binary search |

Excellent |

7 |

|

|

O(n) |

Linear |

Linear search |

Good |

100 |

|

|

O(n log n) |

Linearithmic |

Merge sort |

Good |

664 |

|

|

O(n²) |

Quadratic |

Bubble sort |

Fair |

10,000 |

|

|

O(2ⁿ) |

Exponential |

Fibonacci |

Bad |

1.26 \times 10^{30} |

Note: Order of growth is: O(1) < O(log n) < O(n) < O(n log n) < O(n²) < O(2ⁿ).

Rules for Calculating Time Complexity

To find the time complexity, you should count the loops, ignore constants, focus on the largest term, and write the final complexity using O, Omega, or Theta.

- Rule 1: Drop Constants. If T(n) = 5n + 3, drop 5 and 3 to get a result of O(n).

- Rule 2: Drop Lower Order Terms. If T(n) = n^2 + 5n + 100, keep only the highest term (n^2) for a result of O(n²).

- Rule 3: Different Inputs Use Different Variables. If you have separate loops for variables n and m, the result is O(n + m), not O(n).

- Rule 4: Nested Loops Multiply. If you have nested loops running n and m times, the result is O(n times m).

- Quick tip: One loop is O(n), nested loops are O(n²), and a loop dividing by 2 is O(log n).

Space Complexity

Space complexity measures how much extra memory an algorithm uses as the input size grows.

- O(1) Space: Occurs when only a constant number of variables are used, regardless of input size (e.g., storing a result variable for a + b).

- O(n) Space: Occurs when memory usage scales linearly, such as creating a new array of size n.