Feature Engineering

Welcome back to your Machine Learning journey! Today, we are tackling one of the most critical, creative, and time-consuming parts of building any AI model: Feature Engineering.

Let’s break it down step-by-step so you can see exactly why data scientists spend so much of their time on this.

What is Feature Engineering?

Think of machine learning like cooking a gourmet meal.

- Your algorithm is the oven.

- Your raw data is your bag of groceries straight from the store.

If you just throw unpeeled onions, raw flour, and whole eggs into the oven, you won’t get a cake. Feature Engineering is the prepping phase—it’s the washing, peeling, chopping, and measuring of your ingredients so the oven can actually bake something delicious.

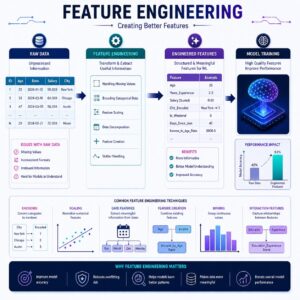

In technical terms, feature engineering is the process of selecting, manipulating, and transforming raw data into “features” (variables or columns) that make it easier for your machine learning model to understand and learn from.

Shutterstock

Connecting the Dots: “Garbage In, Garbage Out”

In previous lessons, you likely learned about different algorithms (like linear regression or decision trees) and how they find patterns in data.

But here is a fundamental truth about machine learning: Models are not magic. They only know what you show them. If you feed an algorithm messy, irrelevant, or confusing data, it will give you bad predictions. In the data science world, this is called “Garbage In, Garbage Out” (GIGO). Feature engineering is how we make sure we are feeding our models high-quality, easily digestible information.

The Step-by-Step Flow of Feature Engineering

Here is the logical flow of how a data scientist engineers features, using a real-world example: Predicting House Prices.

1. Handling Missing Data (Imputation)

Real-world data is messy. Sometimes people forget to fill out forms, or sensors break.

- The Problem: Your housing dataset has 100 houses, but 10 of them are missing the “Number of Bathrooms” value. Most models will crash if they see a blank space.

- The Fix: You can either delete those 10 houses (losing valuable data) or “impute” (fill in) the blanks. You might fill those blanks with the average number of bathrooms in that specific neighborhood.

2. Encoding Categorical Variables

Machine learning models are essentially giant math equations. They only understand numbers, not text.

- The Problem: Your dataset has a column for “Neighborhood” with text values like Downtown, Suburbs, and Countryside.

- The Fix: We have to translate this into math. We might use a technique called One-Hot Encoding, which creates a new Yes/No (1 or 0) column for each category. For example: Is_Downtown? (1 for Yes, 0 for No).

3. Feature Scaling (Normalization/Standardization)

Numbers in your data can have vastly different scales, which can confuse the model into thinking bigger numbers are more important.

- The Problem: Your dataset has a “Square Footage” column (e.g., 2,500 sq ft) and a “Number of Bedrooms” column (e.g., 3). A model might think square footage is a thousand times more important simply because the number is bigger.

- The Fix: We mathematically “squish” all the numbers down to a similar scale, usually between 0 and 1. Now, 2,500 sq ft might become 0.8, and 3 bedrooms might become 0.5. They are now on an even playing field!

4. Feature Creation (The Creative Part!)

Sometimes, the most predictive information isn’t directly in your data—it’s hidden inside it.

- The Problem: You have the “Year Built” (e.g., 1990) and the “Year Sold” (e.g., 2020), but the model struggles to connect them.

- The Fix: You create a brand new column called “Age at Time of Sale” by subtracting the two (2020 – 1990 = 30 years old). Models love this! A 30-year-old house is a much more direct signal for price than two separate four-digit years.

Practical Use Cases

Feature engineering looks different depending on the industry, but it is used everywhere:

- Spam Detection (Text Data): Raw emails are just paragraphs of text. Feature engineering turns that text into features like “Number of exclamation marks,” “Frequency of the word FREE,” or “Length of the email.”

- Credit Card Fraud (Financial Data): A bank has the timestamps of your purchases. Feature engineering creates a feature called “Time since last transaction.” If you buy a coffee in Mumbai and then try to buy a TV in London 10 minutes later, that new time-based feature will scream “FRAUD!” to the model.

- Healthcare (Patient Data): Instead of just looking at height and weight separately, a data scientist might combine them to create a “Body Mass Index (BMI)” feature, which is a much stronger indicator of certain health risks.

Summary

Feature engineering is where the “science” of machine learning meets “art.” It is the process of cleaning, translating, and combining raw data into powerful signals that an algorithm can easily understand. You can have the most advanced AI model in the world, but without good feature engineering, it won’t perform well. By carefully prepping your ingredients, you set your model up for success!