Data distribution

What is Data Distribution?

Imagine you are looking at the test scores for a new Data Structures and Algorithms quiz you just hosted. If you want to know how well the class did, just looking at the average score isn’t enough. You need to know if most students scored around 80%, or if half the class got 100% while the other half failed.

In Machine Learning, Data Distribution is simply the way data points are spread out across different values. It tells you the “shape” of your data, showing you where values are concentrated, where they are sparse, and if there are any bizarre anomalies.

Connecting to What We Know

In our last step, we looked at the structure of our dataset checking the rows, columns, and finding missing values. Think of that as checking the structural integrity of a house.

Looking at data distribution is the next step: examining the rooms inside the house.

Machine Learning models are highly sensitive to the shape of the data you feed them. Many classic algorithms (like Linear Regression) secretly assume your data will be nicely balanced. If you feed them lopsided data without realizing it, your model will make biased, inaccurate predictions. By understanding the distribution first, we can clean and transform the data so the model can digest it easily.

Step-by-Step: Exploring Data Distribution

Let’s break down how we actually look at the spread of our data, step-by-step:

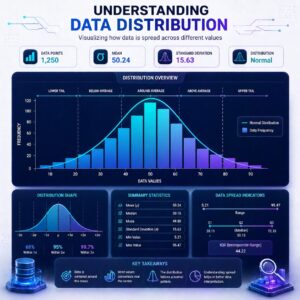

1. Visualizing the Spread (The Histogram)

- What it is: A histogram is a bar chart that groups your data into “buckets” (or bins) to show how often different ranges of values occur.

- In practice: If you plot the heights of 1,000 adults, you’d have a tall bar around 5’7″ (because many people are average height) and very short bars at 4’5″ and 7’0″.

2. Spotting the “Normal” Distribution (The Bell Curve)

- What it is: The most famous shape in statistics. The data is perfectly symmetrical, piling up in the middle and tapering off equally on both sides.

- Real-life example: Human heights, IQ scores, or the sizes of manufactured shoes. The Mean (average) and Median (middle value) sit at the exact same spot in the center.

3. Identifying Skewed Data (The Lopsided Curves)

Often, data isn’t perfectly symmetrical. It gets pulled, or “skewed,” to one side.

- Right-Skewed (Positive Skew): The data is piled up on the left, with a long “tail” stretching to the right.

- Example: Tech salaries. Most engineers might make a standard baseline salary (the pile on the left), but a handful of millionaire founders stretch the tail far to the right, pulling the average up.

- Left-Skewed (Negative Skew): The data is piled up on the right, with a long tail stretching to the left.

- Example: Scores on a very easy Machine Learning test. Most students score between 90 and 100 (pile on the right), but a few students who didn’t study get 30s or 40s, stretching the tail to the left.

4. Finding the Outliers

- What it is: Data points that sit far away from the rest of the distribution.

- In practice: If you are plotting the ages of people watching a coding tutorial, most might be between 18 and 35. If you see a viewer who is 112 years old, that’s an outlier! You have to decide if it’s a typo to delete, or a real data point to keep.

To help you visualize how the shape of the data shifts, try exploring this interactive widget. You can change the distribution type and see how it affects the balance of the data!

Practical Use Cases: Why do we do this?

Understanding the shape of your data is a massive advantage before you start training algorithms:

- Choosing the Right Algorithm: If you notice your data is perfectly normally distributed, you can confidently use algorithms like Gaussian Naive Bayes or standard Linear Regression. If it’s wildly skewed, you might need to use more robust algorithms like Decision Trees.

- Fixing the Skew: If you have right-skewed data (like those tech salaries), it can confuse your model. You can apply mathematical tricks (like a Logarithmic Transformation) to magically squish that lopsided data into a neat, normal bell curve, making it much easier for the model to learn from.

- Filtering Noise: Spotting outliers in the “tails” of your distribution helps you remove bad data before it ruins your model’s accuracy.

Summary

Checking the data distribution is how we understand the “shape” of our dataset. By visualizing the data with histograms, we can see if our values follow a balanced, normal bell curve, or if they are skewed to one side. This step highlights outliers and tells us exactly how we need to prep and transform our data so our machine learning models can learn effectively.

Now that we understand the structure and the shape of our data, would you like to explore the specific techniques we use to fix missing values, or would you prefer to learn how to fix data that is heavily skewed?