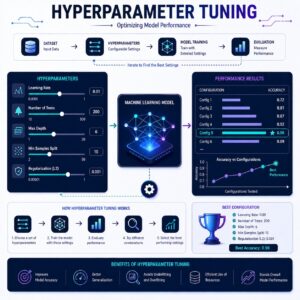

Hyperparameter Tuning

The Simple Definition

Hyperparameter Tuning is the process of adjusting the manual “settings” or “dials” of a machine learning algorithm to get the best possible performance on a metric you care about, such as accuracy.

Imagine you buy a high-end espresso machine. The machine does the actual brewing (just like an algorithm does the learning), but you have to configure the settings before you press start: how coarse to grind the beans, the water temperature, and the pressure. If the espresso tastes too bitter or too weak, you don’t throw the machine away; you tweak the dials. Hyperparameter tuning is how we find the best settings for our ML algorithms.

Connecting to What We’ve Built So Far

Let’s look at the journey of your model so far:

- You prepared your data (Data Preprocessing).

- You split it up (Train-Test Split).

- You evaluated it reliably (Cross-Validation).

- You diagnosed that the model is either Overfitting (too complex) or Underfitting (too simple).

Now, the big question: How do you actually fix the overfitting or underfitting? The answer: By tuning the hyperparameters. You turn the algorithm’s dials up to add complexity, or turn them down to simplify it.
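To make the “dial” idea concrete, here is a minimal sketch using scikit-learn. The synthetic dataset, the choice of a Decision Tree, and the three depth values are all assumptions picked for illustration. It trains the same algorithm with the complexity dial (max_depth) set low, medium, and unlimited, then prints the score on the training data next to the score on held-out data.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic, slightly noisy data (an assumption, for illustration only).
X, y = make_classification(n_samples=500, n_features=10, flip_y=0.1, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)

results = {}
for depth in [1, 3, None]:  # None lets the tree grow until every leaf is pure
    tree = DecisionTreeClassifier(max_depth=depth, random_state=0)
    tree.fit(X_train, y_train)
    train_score = tree.score(X_train, y_train)
    test_score = tree.score(X_test, y_test)
    results[depth] = (train_score, test_score)
    print(f"max_depth={depth}: train={train_score:.2f}, test={test_score:.2f}")
```

When reading the output, a training score far above the test score is the usual sign of overfitting, while two low scores suggest underfitting. The exact numbers depend on the data, so treat them as a diagnostic rather than a rule.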

Step-by-Step: How to Tune a Model

Before we tune, we have to understand the difference between two very similar words in Machine Learning:

- Parameters: These are the rules the model learns on its own by looking at the data (e.g., learning that the word “urgent” usually means an email is spam).

- Hyperparameters: These are the settings you (the engineer) must choose before the training even starts (e.g., telling the model it is only allowed to look at a maximum of 10 words per email).
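The difference is easy to see in code. The sketch below assumes scikit-learn and uses Logistic Regression on a synthetic dataset purely as an example; the values C=0.5 and max_iter=1000 are arbitrary. The hyperparameters exist the moment you create the model, while the parameters only appear after fit() has looked at the data.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Synthetic data with 5 features (an assumption, for illustration only).
X, y = make_classification(n_samples=200, n_features=5, random_state=0)

# Hyperparameters: chosen by you, before training starts.
model = LogisticRegression(C=0.5, max_iter=1000)
hyperparameters = model.get_params()
print("Chosen by the engineer:", hyperparameters["C"], hyperparameters["max_iter"])

# Parameters: learned by the model itself during fit().
model.fit(X, y)
print("Learned weights (one per feature):", model.coef_)
print("Learned intercept:", model.intercept_)
```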

Here is the logical flow of how we find the best hyperparameters:

Step 1: Choose the Dials (The Search Space)

First, you look at your chosen algorithm and decide which settings you want to adjust. For example, if you are using a Decision Tree algorithm, you might want to adjust its Max Depth (the maximum number of levels of splits the tree is allowed to grow between its root and its deepest leaf).

Step 2: Define the Options

You don’t just guess one number; you give the computer a list of options to try. For example: “Hey computer, try a Max Depth of 3, 5, 10, and 20.”
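In code, Steps 1 and 2 usually end up as a plain dictionary: each key is a dial and each value is the list of options to try. The sketch below uses only the Python standard library. The second dial, min_samples_leaf, and its values are an assumption added to show how quickly the number of combinations grows.

```python
from itertools import product

# The search space: dial name -> options to try.
param_grid = {
    "max_depth": [3, 5, 10, 20],
    "min_samples_leaf": [1, 5, 10],  # hypothetical second dial, for illustration
}

# Every combination is one candidate model the computer will have to train.
names = list(param_grid)
combinations = [
    dict(zip(names, values)) for values in product(*param_grid.values())
]

print(len(combinations))  # 4 options x 3 options = 12 candidates
print(combinations[0])
```

Because the counts multiply, adding one more dial with five options would turn 12 candidates into 60, which is worth keeping in mind before making the lists long.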

Step 3: Unleash the Search Algorithms

Manually testing every setting would take forever. Instead, we use specific tuning algorithms to do the heavy lifting:

- Grid Search: The “brute force” method. The computer will systematically test every single combination of the settings you gave it. It is incredibly thorough but can take a lot of computing time.

- Random Search: Instead of testing every combination, the computer tests a fixed number of randomly chosen combinations from your settings. Because you cap the number of trials, it is usually much faster than Grid Search and, surprisingly, often finds a combination that is nearly as good!
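Both search methods are available in scikit-learn as GridSearchCV and RandomizedSearchCV. The sketch below runs them side by side on a synthetic dataset. The dataset, the grid values, and n_iter=5 are assumptions chosen to keep the example small.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV
from sklearn.tree import DecisionTreeClassifier

# Synthetic data (an assumption, for illustration only).
X, y = make_classification(n_samples=300, n_features=10, random_state=0)

param_grid = {"max_depth": [3, 5, 10, 20], "min_samples_leaf": [1, 5, 10]}

# Grid Search: tries all 4 x 3 = 12 combinations.
grid = GridSearchCV(DecisionTreeClassifier(random_state=0), param_grid, cv=5)
grid.fit(X, y)

# Random Search: tries only n_iter randomly chosen combinations.
rand = RandomizedSearchCV(
    DecisionTreeClassifier(random_state=0),
    param_grid,
    n_iter=5,
    cv=5,
    random_state=0,
)
rand.fit(X, y)

print("Grid Search tried:", len(grid.cv_results_["params"]), "combinations")
print("Random Search tried:", len(rand.cv_results_["params"]), "combinations")
print("Grid best:", grid.best_params_, round(grid.best_score_, 3))
print("Random best:", rand.best_params_, round(rand.best_score_, 3))
```

In this setup the random candidates are drawn from the same grid, so Random Search can at best match Grid Search. Its advantage is cost: fewer than half the trainings here, and the saving grows as the grid gets larger.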

Step 4: Pick the Winner using Cross-Validation

As the search (Grid or Random) tests each combination of dials, it uses Cross-Validation to score them. At the end, it hands you back the specific combination of hyperparameters that achieved the highest cross-validation score. You now have your optimized model! As a final check, score it once on the test set you held back during the Train-Test Split.
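To see what the search tools are doing under the hood, here is a hand-written version of Step 4 for a single dial. It is a simplified sketch, not how scikit-learn implements its search classes, and the dataset and depth options are assumptions for illustration. Each option gets a 5-fold cross-validation score, and the option with the highest average wins.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

# Synthetic data (an assumption, for illustration only).
X, y = make_classification(n_samples=300, n_features=10, random_state=0)

# Score every option with 5-fold cross-validation.
scores = {}
for depth in [3, 5, 10, 20]:
    model = DecisionTreeClassifier(max_depth=depth, random_state=0)
    scores[depth] = cross_val_score(model, X, y, cv=5).mean()
    print(f"max_depth={depth}: mean CV accuracy = {scores[depth]:.3f}")

# The winner is the option with the highest average score.
best_depth = max(scores, key=scores.get)
print("Best max_depth:", best_depth)

# Retrain one final model with the winning setting on all the training data.
final_model = DecisionTreeClassifier(max_depth=best_depth, random_state=0).fit(X, y)
```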

Practical Use Cases

How do engineers use hyperparameter tuning in the real world?

- E-Commerce Recommendations (e.g., Amazon): Algorithms that recommend products have a hyperparameter called the Learning Rate (how fast the model updates its beliefs). Engineers constantly tune this. If the learning rate is too high, buying one pair of socks will make the algorithm think you only want to buy socks forever.

- Credit Card Fraud Detection: Banks use algorithms with a hyperparameter that controls how “sensitive” the model is. Engineers tune this dial meticulously to find the perfect balance between catching real fraudsters and accidentally freezing the accounts of innocent customers buying groceries.

- Real Estate Pricing Models: A model predicting house prices might use an algorithm that can be tuned to ignore “outliers” (like a $50 million mansion in a normal neighborhood). Tuning this threshold prevents the model from skewing the prices of regular homes.

Summary

If a machine learning model is underfitting or overfitting, Hyperparameter Tuning is your primary tool to fix it. While the model learns the parameters from the data, you control the hyperparameters, the manual settings of the algorithm. By using techniques like Grid Search or Random Search combined with Cross-Validation, you can systematically test different combinations of settings until you find the “sweet spot” that yields the most accurate, reliable predictions.