Cross-Validation

The Simple Definition

Cross-Validation is a technique used to evaluate your machine learning model multiple times on different pieces of your dataset. Instead of testing the model just once, you test it repeatedly to ensure its high performance is actually consistent, rather than just a lucky guess.

Think of it like preparing for a technical coding interview. If you do just one mock interview and the interviewer happens to ask the only three sorting algorithms you memorized, you might score 100%. But are you actually ready for the real thing? Probably not. You just got a “lucky” test.

To truly know if you are ready, you need to do multiple mock interviews with different sets of questions. Cross-validation does exactly this for your machine learning models.

Connecting to Previous Concepts

In previous lessons, we covered the Train-Test Split, where we take our dataset and cut it into two pieces: 80% for training and 20% for testing. We also learned about Overfitting when a model memorizes the data instead of learning the patterns.

But the standard Train-Test Split has a hidden flaw: What if by pure chance, all the “easy” data ends up in the test set? Your model might score 95% accuracy, tricking you into thinking it’s perfect, when in reality, it’s overfitting to a very specific, lucky batch of data.

Cross-validation is the model improvement algorithm we use to eliminate this “luck” factor.

Step-by-Step: How K-Fold Cross-Validation Works

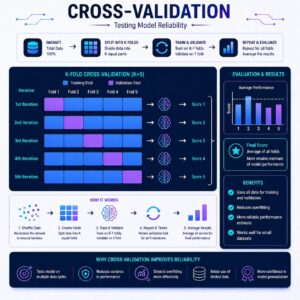

The most popular algorithm for this is called K-Fold Cross Validation. The “K” simply stands for the number of pieces you want to chop your data into. Here is the logical flow if we choose $K = 5$ (a 5-Fold Cross-Validation):

- Chop the Data: The algorithm divides your entire dataset into 5 equal-sized chunks (called “folds”).

- Iteration 1: It holds out Chunk 1 to act as the test set. It uses Chunks 2, 3, 4, and 5 to train the model. It records the test score.

- Iteration 2: It holds out Chunk 2 to act as the test set. It uses Chunks 1, 3, 4, and 5 to train. It records the score.

- Repeat the Cycle: It continues this process until every single chunk has had exactly one turn being the test set.

- Calculate the Final Grade: You now have 5 different test scores. The algorithm averages them together to give you the true, final accuracy of your model.

If the average score is high, you can confidently deploy your model knowing it handles all types of data well!

Practical Use Cases

Where is cross-validation absolutely essential in the real world?

- Medical Datasets: In healthcare, datasets are often very small (e.g., data from only 200 patients with a rare condition). You can’t afford to waste 20% of that precious data permanently sitting in a test set. Cross-validation allows you to train and test the model using all the available data efficiently.

- Financial Forecasting: Stock market data varies wildly. If your test set randomly only included data from a “bull market” (when stocks are going up), your model would fail in a crash. Cross-validation ensures the model is tested against all periods of market volatility.

- Spam Detection: Spammers constantly change their tactics. Cross-validating a spam filter ensures the model hasn’t just memorized one specific type of phishing email, but can generalize to recognize a wide variety of malicious patterns.

Summary

While a simple Train-Test split is a great starting point, it leaves your model vulnerable to the “luck of the draw.” Cross-Validation (specifically K-Fold) systematically divides your data into chunks, rotating which chunk is used for testing and which are used for training. By averaging the results of these multiple tests, you get a highly reliable, realistic metric of how well your model will perform in the real world, ensuring you haven’t accidentally overfitted your algorithm.