Overfitting vs. Underfitting

The Simple Definition

In Machine Learning, Model Improvement is the process of adjusting your algorithm so it makes highly accurate predictions on entirely new, unseen data. The biggest hurdle in this process is finding the right balance between a model that is too simple (Underfitting) and a model that is overly complex (Overfitting).

Think of it like studying for a technical interview. You want to learn the underlying problem-solving concepts, not just skim the textbook, nor memorize the exact code for a specific practice problem.

Connecting to What We’ve Built So Far

In our previous modules, we walked through Python basics, cleaned our raw information using data preprocessing, and finally split our data into a train-test split.

The train-test split is our diagnostic tool. We train the model on the “Training Set” and test its real-world readiness on the “Test Set.” When we look at those test results, we often realize our model isn’t perfect yet. Diagnosing why it’s struggling whether it’s underfitting or overfitting is the first step in the model improvement process.

Step-by-Step: Diagnosing the Model

Let’s break down the two main problems your algorithms will face and how to spot them.

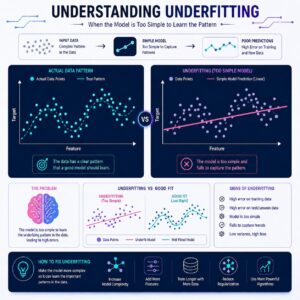

1. Underfitting (The “Too Simple” Model)

What it is: Underfitting happens when a model is too basic to capture the patterns in the data. It performs poorly on the training data and poorly on the test data.

- The Real-Life Example: Imagine a student who barely studies for their software engineering interview. They don’t understand the core concepts of algorithms. As a result, they fail the mock interviews (the training data) and they fail the actual interview (the test data).

- How to fix it: Make the model more complex! Give the algorithm more features to study, increase its training time, or switch to a more powerful algorithm.

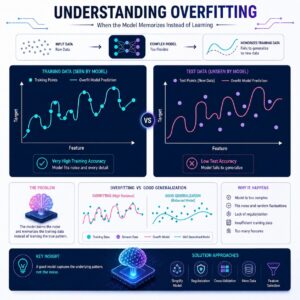

2. Overfitting (The “Too Complex” Model)

What it is: Overfitting happens when a model learns the training data too well. It memorizes the noise, the outliers, and the exact details of the training set instead of learning the general trends. It will get a near-perfect score on the training data, but perform terribly on the test data.

- The Real-Life Example: Imagine a student who memorizes the exact, line-by-line solution to a specific LeetCode problem. They score 100% on that exact practice test. But, when the interviewer changes one minor detail about the problem (the test data), the student completely freezes because they memorized the answer instead of learning the logic.

- How to fix it: Simplify the model. We use specific Model Improvement Algorithms to pull the model back. Techniques like Regularization penalize the model for being too complex, while Pruning cuts away unnecessary branches in decision trees.

3. The Optimal Fit (The “Goldilocks” Zone)

The goal of model improvement is to land right in the middle. An optimally fitted model understands the underlying patterns well enough to perform great on the training data, and applies that logic beautifully to ace the test data.

Practical Use Cases

Where does balancing this trade-off matter in the real world?

- Self-Driving Cars: If a car’s vision algorithm underfits, it might not recognize a stop sign at all. If it overfits, it might only recognize a stop sign if it looks exactly like the pristine, unbent stop sign it saw in its training data, completely ignoring a stop sign with a scratch on it.

- Resume Screening: A model designed to flag good technical resumes will fail if it overfits. It might learn that “every good candidate in the training data went to University X,” and wrongfully reject brilliant self-taught developers or graduates from other schools.

- Medical Diagnosis: An overfitting model might memorize that a specific artifact on an X-ray machine (like a dust speck on the lens) means a patient is sick, rather than actually analyzing the biological markers.

Summary

Building a machine learning model is an iterative process. After your train-test split, you must diagnose your model’s performance. If it does poorly across the board, it is underfitting (too simple). If it aces the training data but fails the test data, it is overfitting (too complex and memorized). By using model improvement techniques to add or remove complexity, you can guide your algorithm to the optimal fit, ensuring it is ready for real-world predictions.