Feature scaling

The Simple Definition

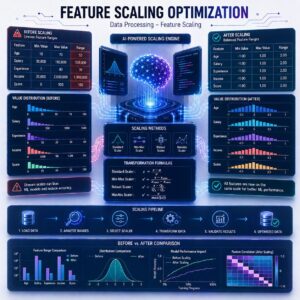

Feature Scaling is the process of transforming the numerical columns in your dataset so that they all sit on a similar scale, letting the algorithm compare them on equal terms.

Without feature scaling, many algorithms (especially those that rely on distances or gradient descent) let the columns with big numbers dominate and effectively ignore the columns with smaller numbers.

The Problem: Why Do We Need This? (Step-by-Step)

To understand why this step is critical, let’s look at how a computer’s “brain” works when comparing features.

- The Scenario: You are building an ML model to predict house prices. Your clean dataset has two numerical features:

  - Number of Bedrooms: Usually ranges from 1 to 5.

  - Square Footage: Usually ranges from 1,000 to 5,000.

- The Flaw: Machine Learning algorithms are essentially giant math equations. They don’t know what a “bedroom” or “square foot” is; they only see raw numbers.

- The Result: Because 5,000 is a massively larger number than 5, any calculation that combines the two columns (such as the distance between two houses) is dominated by Square Footage. The algorithm effectively treats Square Footage as far more important than the Number of Bedrooms, and it can all but ignore the bedroom data simply because the numbers are smaller!
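
To make the effect concrete, here is a minimal sketch using Euclidean distance, the kind of calculation a distance-based algorithm such as k-nearest neighbors performs. The three houses and the `euclidean` helper are made up for illustration.

```python
import math

# Hypothetical houses as (bedrooms, square_footage) pairs.
house_a = (2, 1500)
house_b = (5, 1550)  # 3 more bedrooms than A, almost the same size
house_c = (2, 2000)  # same bedrooms as A, 500 sq ft larger


def euclidean(p, q):
    """Straight-line distance between two points."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


dist_ab = euclidean(house_a, house_b)
dist_ac = euclidean(house_a, house_c)

# On raw numbers, A looks far "closer" to B than to C, even though
# B has more than double the bedrooms. The bedroom gap contributes
# only 3**2 = 9 to the squared distance; the 50 sq ft gap adds 2500.
print(f"A to B: {dist_ab:.2f}")
print(f"A to C: {dist_ac:.2f}")
```

The three-bedroom difference barely registers next to a 50 square foot difference, which is exactly the imbalance that scaling is meant to remove.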

Feature scaling fixes this by bringing both the “Bedrooms” column and the “Square Footage” column onto a comparable scale, leveling the playing field so the algorithm can learn fairly.

Method 1: Normalization (The Strict Box)

Normalization (often called Min-Max Scaling) is a technique that takes your data and squeezes it into a tiny, strict box: specifically, a scale between 0 and 1.

- How it works: The smallest number in your column becomes exactly 0. The largest number becomes exactly 1. Every other number becomes a decimal in between, based on how far it sits between those two: (value - min) / (max - min).

- Real-Life Example: Imagine grading a test out of 200 points, where the lowest possible score is 0 and the highest is 200. A student scores 150. If we normalize that score to a 0-1 scale using those bounds (essentially calculating a percentage), their score becomes 0.75.

- When to use it: Normalization is fantastic when you know the exact boundaries of your data. For example, in Computer Vision, 8-bit image pixels always have a color value between 0 and 255. Normalizing them to a 0-1 scale keeps the inputs small and consistent, which typically helps image models train more smoothly.
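
The min-max formula above can be sketched in a few lines of plain Python. The `min_max_scale` helper and its optional `lo`/`hi` bounds are illustrative names, not part of any library; passing explicit bounds covers the case where the limits are known in advance, as with test scores or pixels.

```python
def min_max_scale(values, lo=None, hi=None):
    """Rescale values to the 0-1 range.

    If lo/hi are not given, the observed min and max are used.
    """
    lo = min(values) if lo is None else lo
    hi = max(values) if hi is None else hi
    if hi == lo:
        # Every value is identical; avoid dividing by zero.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


# Bounds taken from the data itself.
square_footage = [1000, 2000, 3000, 5000]
scaled_sqft = min_max_scale(square_footage)
print(scaled_sqft)  # [0.0, 0.25, 0.5, 1.0]

# Bounds known in advance: a test graded from 0 to 200.
scaled_score = min_max_scale([150], lo=0, hi=200)
print(scaled_score)  # [0.75]

# Bounds known in advance: 8-bit pixel values from 0 to 255.
scaled_pixels = min_max_scale([0, 128, 255], lo=0, hi=255)
print(scaled_pixels)
```

In a real project you would normally compute the min and max on the training data only and reuse them for new data, so that every split is scaled the same way.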

Method 2: Standardization (The Bell Curve)

Standardization (often called Z-Score Scaling) is a bit more flexible. Instead of locking the data into a strict 0-1 box, it centers the data around the number 0.

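- How it works: It takes the average (the mean) of your column and subtracts it from every value, so the average becomes 0. It then divides by the column's standard deviation, so each value is expressed as a number of “standard steps” away from the average. If a data point is exactly average, it is a 0. If it is larger than average, it becomes a positive number (like +1 or +2). If it is smaller than average, it becomes a negative number (like -1.5).

- Real-Life Example: Think of standardized testing, like an IQ test. The average score is centered at 100, and one “standard step” (one standard deviation) is 15 points on most IQ tests. If you score 115, you are one “standard step” above average. Standardization does this exact math, converting the average to 0 and showing how many standard steps away everything else is, so a score of 115 becomes +1.

- When to use it: Standardization is usually the “go-to” default for many Machine Learning algorithms. Unlike Normalization, it does not force everything into a fixed range, so a weird, unexpected outlier squashes the rest of your data less severely. It is not immune, though: an extreme outlier still shifts the mean and inflates the standard deviation.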
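
Standardization can be sketched the same way: subtract the mean, then divide by the standard deviation. The `standardize` helper is an illustrative name, and this sketch assumes the population standard deviation (`statistics.pstdev`); using the sample standard deviation instead would give slightly different numbers.

```python
import statistics


def standardize(values):
    """Convert values to z-scores: (value - mean) / standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)  # population standard deviation (assumption)
    if std == 0:
        # Every value is identical; avoid dividing by zero.
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]


bedrooms = [1, 2, 3, 4, 5]
z_bedrooms = standardize(bedrooms)

# The average value (3 bedrooms) maps to 0; smaller values go
# negative and larger values go positive.
print([round(z, 3) for z in z_bedrooms])

# IQ example with a known mean of 100 and standard deviation of 15.
iq_z = (115 - 100) / 15
print(iq_z)  # 1.0
```

After standardizing, the column has a mean of 0 and a standard deviation of 1, but no fixed minimum or maximum.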

Summary

Feature Scaling ensures that large numbers don’t unfairly bully small numbers in your dataset. Normalization squeezes your data into a strict scale between 0 and 1, which is great when your data has hard, predictable boundaries. Standardization centers your data around an average of 0 and measures everything in standard deviations, making it a common and fairly robust default for teaching Machine Learning models how to compare apples to oranges.