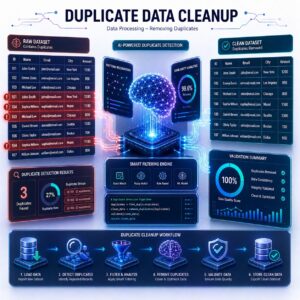

Removing duplicates

The Simple Definition

A Duplicate is simply a row in your dataset that is an exact copy of another row, with the same value in every column.

Removing Duplicates (often called deduplication) is the process of scanning your dataset, finding these identical twins, triplets, or quadruplets, and deleting the extras so that only one unique record remains.
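Here is a minimal pandas sketch that makes the definition concrete. The table and its column names are made up for illustration. It uses `DataFrame.duplicated()`, which flags a row only when every column matches an earlier row:

```python
import pandas as pd

# Hypothetical ratings table: rows 0 and 1 are identical,
# row 2 differs only in its rating, so it is NOT a duplicate
ratings = pd.DataFrame({
    "user_id": [7, 7, 7],
    "movie": ["Inception", "Inception", "Inception"],
    "rating": [5, 5, 4],
})

# duplicated() marks each row that repeats an earlier row in ALL columns;
# the first occurrence is left unmarked, so it survives as the unique record
is_extra = ratings.duplicated()
print(is_extra.tolist())  # [False, True, False]

# Keeping only the unmarked rows leaves one copy of each distinct row
print(len(ratings[~is_extra]))  # 2
```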

Why Are Duplicates a Problem? (Connecting to ML Concepts)

In previous lessons, we learned that a Machine Learning algorithm is like a student studying a textbook (the dataset) to find patterns.

Imagine you are studying for a math test, and your teacher gives you a practice sheet with 100 questions. However, 80 of those questions are the exact same addition problem: “2 + 2 = 4”.

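If you study that sheet, you aren’t going to get better at math. You are just going to become heavily biased toward answering “4”. Machine Learning models behave the same way. Suppose a Supervised Learning model sees the same piece of data repeated 50 times. That one example then counts 50 times as much as any other, so the model over-prioritizes that specific pattern. It starts memorizing instead of learning general patterns, which hurts its accuracy when you deploy it into the real world. Duplicates can also end up in both your training set and your test set. The model then gets tested on rows it has already memorized, and its accuracy looks better than it really is.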
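You can check the practice-sheet analogy with a few lines of pandas. The sketch below uses a made-up sheet, and the `share_of_answer_4` helper is hypothetical. It measures how much of the sheet points to the answer “4” before and after the repeats are removed:

```python
import pandas as pd

# Hypothetical practice sheet: 80 copies of one question plus 20 distinct ones
repeated = [{"question": "2 + 2", "answer": 4}] * 80
distinct = [{"question": f"{a} + {a + 1}", "answer": 2 * a + 1} for a in range(1, 21)]
sheet = pd.DataFrame(repeated + distinct)

def share_of_answer_4(df: pd.DataFrame) -> float:
    """Fraction of rows whose answer is 4 (hypothetical helper)."""
    return float((df["answer"] == 4).mean())

print(round(share_of_answer_4(sheet), 3))                    # 0.8
print(round(share_of_answer_4(sheet.drop_duplicates()), 3))  # 0.048
```

With the repeats in place, “4” is the answer to 80% of the sheet. After deduplication, that question is just 1 of 21 examples.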

How Do Duplicates Happen? (A Real-Life Example)

You might wonder, “How does the exact same data get recorded multiple times?” It is usually caused by human error, a system glitch, or combining data from several sources.

Let’s say you are working on a data science project analyzing movie ratings. Your dataset contains thousands of reviews from users, and you want to predict which genres are the most popular.

- The Glitch: A user watches Inception and rates it 5 stars. They click “Submit Review,” but their internet lags. Thinking it didn’t work, they mash the “Submit” button three more times.

- The Result: The database records that exact same 5-star review four times. You now have four identical rows: same user ID, same movie, same rating, same timestamp.

- The Impact: If you don’t remove those extras, your ML model will analyze the data and falsely believe that four separate people loved the movie, heavily skewing your final predictions!
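Here is a small sketch of that scenario using an invented reviews table. The IDs, genres, and timestamps are illustrative, not real data. Counting reviews per genre shows how the three extra copies change which genre looks most popular:

```python
import pandas as pd

# Hypothetical reviews table: user 101's Inception review was submitted four times
reviews = pd.DataFrame({
    "user_id":   [101, 101, 101, 101, 102, 103],
    "movie":     ["Inception"] * 4 + ["Toy Story", "Up"],
    "genre":     ["Sci-Fi"] * 4 + ["Animation", "Animation"],
    "rating":    [5, 5, 5, 5, 4, 5],
    "timestamp": ["2024-05-01 20:15"] * 4 + ["2024-05-01 21:00", "2024-05-02 09:30"],
})

# Count reviews per genre with and without the duplicate rows
before = reviews["genre"].value_counts()
after = reviews.drop_duplicates()["genre"].value_counts()

print(before.idxmax())  # Sci-Fi     (4 reviews vs. 2)
print(after.idxmax())   # Animation  (2 reviews vs. 1)
```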

How We Handle It (The Practical Step)

Unlike handling missing values, which requires tough statistical choices (like whether to drop or impute), removing duplicates is beautifully simple.

When you load your data into a Pandas DataFrame (like we discussed a few lessons ago), the library does the work for you with its built-in `drop_duplicates()` method. It scans every row, keeps the first copy of each row it sees (its default behavior), and removes all the identical copies that follow. It is fast even on fairly large tables, which makes it a one-and-done cleaning step. Keep in mind that it returns a new DataFrame rather than changing the original, so you assign the result back to a variable.
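Here is a minimal sketch of that step on a small made-up ratings table. `duplicated()` and `drop_duplicates()` are standard pandas `DataFrame` methods. Everything else in the example is illustrative:

```python
import pandas as pd

# Hypothetical ratings: the row (101, "Inception", 5) appears three times
df = pd.DataFrame({
    "user_id": [101, 101, 102, 101],
    "movie": ["Inception", "Inception", "Up", "Inception"],
    "rating": [5, 5, 4, 5],
})

# How many extra copies are there? (the first occurrence is not counted)
print(df.duplicated().sum())  # 2

# drop_duplicates() keeps the first occurrence by default (keep="first")
# and returns a NEW DataFrame, so we assign the result back
df = df.drop_duplicates()
print(len(df))             # 2
print(df.index.tolist())   # [0, 2]
```

The surviving rows keep their original index labels (0 and 2 here). Passing `ignore_index=True` to `drop_duplicates()` renumbers them from 0.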

Practical Use Cases

Almost every industry has to scrub duplicates before training its models. Here is how it looks in the real world:

- E-Commerce & Retail: When companies merge two different databases together (like bringing last year’s sales data into this year’s system), customers often get imported twice. Removing duplicates ensures a model doesn’t predict that a customer will buy twice as much coffee as they actually do.

- Social Media Sentiment Analysis: Say an ML model is analyzing Twitter (X) data to see whether people like a new product. The scraper might collect the exact same post 10,000 times through retweets. Deduplicating on the post text ensures the model counts it as one original opinion rather than 10,000 separate happy customers.

- Healthcare Records: If a patient is registered twice in a hospital’s system due to a clerical error, an ML model predicting resource needs might falsely assume there are two separate patients requiring two separate beds.
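The first two cases can be sketched in pandas as shown below. The tables are hypothetical. The second half relies on the `subset` parameter of `drop_duplicates()`, which compares only the columns you list. This handles data where the copies match in the columns that matter but not in every column:

```python
import pandas as pd

# Hypothetical customer tables from last year's and this year's systems;
# customer 2 was imported into both
last_year = pd.DataFrame({"customer_id": [1, 2], "name": ["Ana", "Ben"]})
this_year = pd.DataFrame({"customer_id": [2, 3], "name": ["Ben", "Cy"]})

# Stack the two tables, then drop the exact repeat of customer 2
customers = pd.concat([last_year, this_year], ignore_index=True).drop_duplicates()
print(customers["customer_id"].tolist())  # [1, 2, 3]

# Hypothetical scraped posts: retweets repeat the same text but carry
# different retweeter IDs, so the rows are NOT identical as a whole
posts = pd.DataFrame({
    "retweeted_by": ["u1", "u2", "u3", "u4"],
    "text": ["Love the new phone!"] * 3 + ["Battery life is bad."],
})

# subset= compares only the listed columns when deciding what counts as a duplicate
print(len(posts.drop_duplicates()))                 # 4 (no full-row copies)
print(len(posts.drop_duplicates(subset=["text"])))  # 2
```

Which columns to put in `subset` is a judgment call for each dataset. Exact matching also has limits: a patient registered twice with a typo in their name would not be caught by either call.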

Summary

Removing duplicates is a vital Data Preprocessing step where we delete identical, repeating rows from our dataset. We do this because feeding an algorithm the same example over and over biases it toward that example and pushes it to memorize data rather than learn the underlying patterns. Thanks to tools like Pandas, cleaning up after these glitches takes just one line of code!